Introduction

Discussions about Computer Software Assurance often dwell on what clinical trial teams no longer need to do. The real question is what new demands arise in the CSV-to-CSA shift, especially now that artificial intelligence is embedded in so many of the trial systems your team relies on.

The CSV Paradigm Worked — Until It Didn’t

For two decades, Computer System Validation (CSV) assumed that comprehensive documentation built regulatory confidence. Installation Qualification (IQ), Operational Qualification (OQ), and Performance Qualification (PQ) packages were intentionally thick. Every function was tested with scripts. Every change meant re-validation. The documentation trail served as evidence.

However, this model was designed for the static, on-premise systems of the early 2000s, and it no longer fits today’s clinical technology. Platforms are now cloud-based, SaaS applications follow continuous update cycles, and agile development pipelines are standard. Most critically, AI and machine learning systems are neither static nor fully deterministic. The one-size-fits-all documentation burden consumed significant resources but did not proportionately improve patient safety or data quality assurance.

Regulators responded. GAMP 5 Second Edition had already established risk-proportionate validation as the expected industry methodology. The FDA’s Computer Software Assurance final guidance, issued September 2025 (updated and finalised in February 2026), then formalised the change at the regulatory level: from proving compliance through exhaustive paperwork to achieving assurance through proportionate, documented risk reasoning. Critically, the underlying purpose has not changed: systems must still perform reliably in regulated environments. What changes is how your team demonstrates that assurance.

What CSV to CSA Actually Requires You to Do Differently

CSA requires one key shift: apply risk proportionality. Before performing any assurance activity, your team must determine intended use, classify process risk, and select testing approaches accordingly. High-risk software functions—those that could compromise patient safety, product quality, or data integrity—warrant rigorous, scripted testing with detailed protocols and full traceability. Lower-risk functions can be addressed through exploratory testing, scenario-based methods, or documented reliance on vendor qualification evidence.

This means you must update your validation SOPs. Instead of starting with a protocol, start with a clear risk question: if this function fails, what happens to patient safety or data integrity?
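The risk-first ordering described above can be sketched in a few lines of Python. This is only an illustrative sketch: the `SoftwareFunction` fields, the two-level risk split, and the example functions are assumptions made for the example, not terms taken from the FDA guidance or from any validation tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SoftwareFunction:
    """Hypothetical description of one function of a trial system."""
    name: str
    intended_use: str
    failure_could_harm_patient_safety: bool
    failure_could_harm_product_quality: bool
    failure_could_harm_data_integrity: bool


def is_high_risk(fn: SoftwareFunction) -> bool:
    # The risk question comes first: if this function fails, what happens
    # to patient safety, product quality, or data integrity?
    return (
        fn.failure_could_harm_patient_safety
        or fn.failure_could_harm_product_quality
        or fn.failure_could_harm_data_integrity
    )


def plan_assurance(fn: SoftwareFunction) -> dict:
    """Select a testing approach only after intended use and risk are known."""
    if is_high_risk(fn):
        approach = "scripted testing with detailed protocols and full traceability"
    else:
        approach = (
            "exploratory or scenario-based testing, or documented "
            "reliance on vendor qualification evidence"
        )
    return {
        "function": fn.name,
        "intended_use": fn.intended_use,
        "risk": "high" if is_high_risk(fn) else "lower",
        "approach": approach,
    }


# Illustrative (invented) functions of a trial platform.
eligibility = SoftwareFunction(
    "eligibility_check", "screen subjects against protocol criteria",
    True, False, True,
)
report_theme = SoftwareFunction(
    "report_theme", "choose colours for a dashboard",
    False, False, False,
)

for fn in (eligibility, report_theme):
    print(plan_assurance(fn))
```

The returned dictionary doubles as a record of the reasoning, which matters because the risk rationale itself is part of the evidence.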

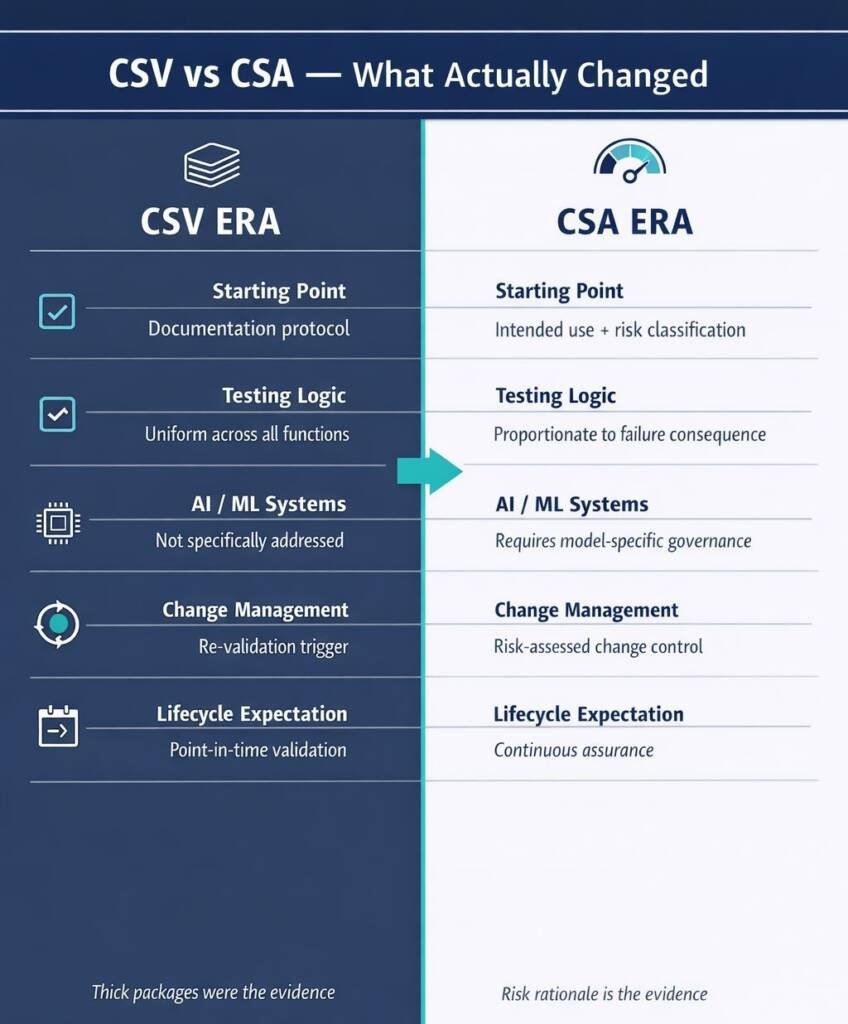

CSV vs CSA: A Practical Comparison


| Dimension | CSV Approach | CSA Approach |
|---|---|---|
| Starting point | Documentation protocol | Intended use and risk classification |
| Testing intensity | Uniform across all functions | Proportionate to failure consequence |
| AI/ML systems | Not specifically addressed | Requires model-specific governance |
| Change management | Re-validation trigger | Risk-assessed change control |
| Inspection evidence | Thick test packages | Documented risk rationale + targeted evidence |
| Lifecycle expectation | Point-in-time validation | Continuous assurance |

Two points are commonly misunderstood. First, CSA does not mean less rigour for high-risk functions — it means appropriate rigour across all functions. Second, the risk assessment itself must be documented and defensible. Auditors expect to see genuine critical thinking about where system failures could harm patients, not a paper trail that masks insufficient testing. For high-risk functions, including any AI model influencing patient eligibility, safety signal detection, or efficacy endpoints, documentation and testing standards remain stringent.

How AI Complicates the CSV to CSA Validation Equation

When AI is used in clinical trials, the transition from CSV (Computer System Validation) to CSA (Computer Software Assurance) becomes more complex. AI introduces two main challenges: non-determinism and model drift. Non-determinism means that AI and machine learning models can produce different outputs for the same inputs, which traditional validation protocols (IQ, OQ, PQ) do not account for.

The FDA’s seven-step credibility assessment framework addresses this by asking if there is enough evidence to trust the model’s output for a specific context of use (COU). Model drift refers to the decline in model performance as real-world data changes over time.

ICH E6(R3) highlights that validation is a continuous process, not just a one-time pre-deployment task. The joint FDA–EMA AI principles, released in January 2026, require ongoing monitoring and re-evaluation to manage data drift throughout the trial lifecycle. For sponsors, this means that being validated at launch is not the same as remaining validated for the rest of the trial.
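One simple way to picture ongoing monitoring is a periodic comparison of recent model performance against the level documented at validation. The sketch below is a minimal illustration under stated assumptions: the function name, the use of agreement with human-reviewed reference results as the performance measure, and the 0.05 tolerance are all hypothetical and are not prescribed by ICH E6(R3) or the FDA–EMA principles.

```python
from statistics import mean
from typing import Sequence


def drift_detected(
    baseline_accuracy: float,
    recent_outcomes: Sequence[bool],
    tolerance: float = 0.05,  # assumed threshold; set it from your own risk assessment
) -> bool:
    """Return True if recent accuracy has fallen below baseline by more than tolerance.

    recent_outcomes holds one boolean per reviewed case in the monitoring
    window: True when the model output matched the reviewed reference result.
    """
    if not recent_outcomes:
        raise ValueError("no recent outcomes to evaluate")
    recent_accuracy = mean(1.0 if ok else 0.0 for ok in recent_outcomes)
    return (baseline_accuracy - recent_accuracy) > tolerance


# Illustrative monitoring windows (invented numbers).
stable_window = [True] * 95 + [False] * 5      # 95% agreement
degraded_window = [True] * 80 + [False] * 20   # 80% agreement

print(drift_detected(0.95, stable_window))
print(drift_detected(0.95, degraded_window))
```

A real monitoring plan would likely track more than one metric and would define in advance what happens when the check fails, such as re-evaluation under change control.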

Data Integrity When the Data Comes from an AI Model

The ALCOA+ principles — Attributable, Legible, Contemporaneous, Original, Accurate, plus Complete, Consistent, Enduring, and Available — remain the regulatory benchmark for clinical trial data quality. They have not changed. Their application to AI-generated data, however, requires deliberate extension that most clinical data management teams have not yet built into standard operating procedures.

When an AI model produces an output — an imputed value, a flagged safety signal, a risk classification — the audit trail must capture which model version generated it, on what input data, at what point in time, and who reviewed and accepted the result. Without this chain, the output cannot satisfy the Attributable and Original requirements of ALCOA+. Under 21 CFR Part 11 and ICH E6(R3)’s data governance provisions, this is not optional. It is a condition of defensible trial evidence.
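The four elements of that chain (model version, input data, time, and reviewer) can be sketched as a small record structure. This is a hypothetical illustration only: the class and field names are invented, and hashing a canonical JSON form of the input is one assumed way to tie an output to its input, not a requirement of 21 CFR Part 11 or ICH E6(R3).

```python
import hashlib
import json
from dataclasses import dataclass, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class AIOutputRecord:
    """Hypothetical audit-trail entry for one AI-generated output."""
    model_version: str
    input_sha256: str          # fingerprint of the exact input data
    output: str
    generated_at_utc: str      # ISO 8601 timestamp
    reviewed_by: Optional[str] = None
    review_decision: Optional[str] = None


def fingerprint(input_data: dict) -> str:
    # Canonical JSON (sorted keys) so the same data always yields the same hash.
    canonical = json.dumps(input_data, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def record_output(model_version: str, input_data: dict, output: str) -> AIOutputRecord:
    return AIOutputRecord(
        model_version=model_version,
        input_sha256=fingerprint(input_data),
        output=output,
        generated_at_utc=datetime.now(timezone.utc).isoformat(),
    )


def record_review(record: AIOutputRecord, reviewer: str, decision: str) -> AIOutputRecord:
    # Returns a new entry; the original record is never modified.
    return replace(record, reviewed_by=reviewer, review_decision=decision)


def chain_is_complete(record: AIOutputRecord) -> bool:
    """True only when version, input, time, and human review are all captured."""
    return all([
        record.model_version,
        record.input_sha256,
        record.generated_at_utc,
        record.reviewed_by,
        record.review_decision,
    ])


draft = record_output("1.4.2", {"subject_id": "S-001", "alt_u_per_l": 182}, "safety signal flagged")
final = record_review(draft, reviewer="medical.monitor", decision="accepted")
print(chain_is_complete(draft), chain_is_complete(final))
```

In an actual system both entries would be retained in an append-only store, so that the unreviewed output and the review decision each remain visible to an inspector.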

There is a further discipline that biostatistics and data management teams must build into standard study start-up: AI models used in efficacy or safety analysis must be pre-specified in the statistical analysis plan (SAP), with the specific model version documented and frozen before database lock. The EMA and the FDA explicitly share this position. Any post-hoc change to an analysis model creates material data integrity exposure that reaches from trial record integrity through to submission defensibility.
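A pre-specified, frozen model lends itself to a simple automated check before any analysis run: compare the model about to be used with what the SAP recorded. The sketch below assumes, purely for illustration, that the SAP specification is available as a dictionary holding a version string and a SHA-256 digest of the model artefact; those key names and the function names are hypothetical.

```python
import hashlib
from typing import List


def artifact_digest(model_bytes: bytes) -> str:
    """SHA-256 digest of the serialised model artefact."""
    return hashlib.sha256(model_bytes).hexdigest()


def frozen_model_discrepancies(sap_spec: dict, version: str, model_bytes: bytes) -> List[str]:
    """List every way the model in use differs from the SAP specification.

    An empty list means the model matches what was pre-specified.
    """
    problems = []
    if version != sap_spec["model_version"]:
        problems.append(
            f"version {version!r} differs from pre-specified {sap_spec['model_version']!r}"
        )
    if artifact_digest(model_bytes) != sap_spec["artifact_sha256"]:
        problems.append("model artefact digest differs from the frozen artefact")
    return problems


# Illustrative data: bytes stand in for a serialised model file.
frozen_bytes = b"model-weights-as-frozen"
sap_spec = {
    "model_version": "2.0.0",
    "artifact_sha256": artifact_digest(frozen_bytes),
}

print(frozen_model_discrepancies(sap_spec, "2.0.0", frozen_bytes))
print(frozen_model_discrepancies(sap_spec, "2.0.1", b"model-weights-retrained"))
```

Checking the artefact digest as well as the version string guards against a model that was retrained without its version label being updated.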

What Regulators and Auditors May Look for in an Inspection

The convergence of FDA CSA guidance, ICH E6(R3), the FDA AI credibility assessment framework, EU GMP Annex 11 (revised draft 2025), the new Annex 22 on Artificial Intelligence, and the FDA–EMA joint AI principles creates a coherent inspection evidence framework. Regulators will ask to see specific documentation — and the framework is now mature enough that ‘we are still developing our approach to AI’ is no longer an acceptable response.

Inspection-Readiness Checklist — AI-Enabled Trial Systems

| Check | Requirement |
|---|---|

Five Priority Actions Before Your Next Trial Starts

For sponsors who have not yet updated their governance for the CSV to CSA era, these five actions address the highest-priority gaps:

Conclusion

The move from CSV to CSA is not about doing less. It is about focusing effort where it adds value: aligning assurance activity with documented risk reasoning, for systems that are more complex, dynamic, and consequential than anything your original validation SOPs were designed to address.

For clinical trial teams operating under current regulatory expectations, this means updating how you classify risk, manage AI system lifecycles, maintain data integrity when AI generates evidence, and document human oversight at every decision point. Each of these has a specific, addressable governance requirement. None of them is speculative.

The regulatory framework exists. The FDA’s CSA guidance, ICH E6(R3), the FDA AI credibility assessment framework, and joint FDA–EMA AI principles define the standard. The real question: can your organisation prove its governance under scrutiny?

The CSV-to-CSA transition is your opportunity to do exactly that. Audit-ready from day one. That is the standard.

Common Questions and Answers

What is the key difference between CSV and CSA in clinical trials?

CSV applies uniform, documentation-heavy scripted testing to all functions, while CSA scales testing and documentation to process risk and intended use, focusing effort on high-impact functions.

Does CSA reduce validation documentation for clinical trial systems?

CSA reduces unnecessary documentation for low-risk functions but keeps stringent, well-evidenced validation for high-risk functions such as safety, eligibility, and primary endpoint-related software.

How does ICH E6(R3) affect validation of AI-enabled clinical trial systems?

ICH E6(R3) requires risk-based quality management, end-to-end data governance, and ongoing fitness-for-purpose, so AI systems need lifecycle monitoring, model-drift controls, and traceable data flows.

What is AI Context of Use (COU) in FDA’s AI credibility framework?

COU defines what the AI model does, which data it uses, and which decisions it influences; it underpins risk classification, test planning, and the credibility evidence regulators expect.

Can an AI model used in trial analysis change after the study starts?

Only with strict change control: models used for data transformation or endpoint evaluation should be pre-specified and frozen in the SAP; material changes can invalidate confirmatory analyses.

What does ‘human oversight’ of AI mean under FDA–EMA principles and the EU AI Act?

Sponsors must design workflows where qualified staff review, can override, and document decisions on AI outputs, with oversight intensity proportionate to model risk and impact on participants.

How should sponsors manage AI features embedded in vendor clinical platforms (CTMS, RTSM, ePRO)?

Sponsors need an AI inventory, clear vendor disclosure, change-notification clauses, access to validation evidence, and CSA/AI-specific checks in vendor qualification and ongoing oversight.

References

U.S. Food and Drug Administration (FDA) – Considerations for the Use of Artificial Intelligence to Support Regulatory Decision-Making for Drug and Biological Products

U.S. Food and Drug Administration (FDA) – Computer Software Assurance for Production and Quality System Software – Final Guidance

European Medicines Agency (EMA) – Guideline on Computerised Systems and Electronic Data in Clinical Trials

European Medicines Agency (EMA) – Reflection Paper on the Use of Artificial Intelligence (AI) in the Medicinal Product Lifecycle

European Commission – Proposal for EU GMP Annex 22: Artificial Intelligence in Good Manufacturing Practice Applications

U.S. Food and Drug Administration (FDA) & European Medicines Agency (EMA) – Guiding Principles of Good Artificial Intelligence Practice in Drug Development

Disclaimer

This article is provided for educational and informational purposes only. It is intended to support general understanding of regulatory concepts and good practice and does not constitute legal, regulatory, or professional advice.

Regulatory requirements, inspection expectations, and system obligations may vary based on jurisdiction, study design, technology, and organisational context. As such, the information presented here should not be relied upon as a substitute for project-specific assessment, validation, or regulatory decision-making.

We have no commercial relationship with some of the entities, vendors, or software referenced. Any examples are illustrative only, and usage may vary by organisation and their needs.

For guidance tailored to your organisation, systems, or clinical programme, we recommend speaking directly with us or engaging another suitably qualified subject matter expert (SME) to assess your specific needs and risk profile.
