Governance First, Validation Always, Inspection Risk Reduced

Across Australia and New Zealand, quality managers, compliance leads, and pharmacovigilance professionals are facing increasing questions from leadership about artificial intelligence. Vendors are making their case, and competitors seem to be advancing. Amid boardroom enthusiasm and regulatory scrutiny, a critical question often goes unexamined: Is your organisation truly ready for responsible AI integration?

AI readiness in GxP extends beyond securing a budget, selecting vendors, or launching a proof of concept. Regulatory obligations do not pause during digital transformation. The Therapeutic Goods Administration (TGA) and the European Medicines Agency (EMA) both expect AI systems used in GxP workflows to be supported by governance, validation, traceability, and documented human oversight before implementation. Deploying AI without these foundations does not accelerate innovation; it increases inspection risk.

This article offers a practical framework for assessing AI readiness objectively, so that your decision is well-founded, whether you proceed, pause, or start with a more limited scope.

Why This Question Matters Now for Smaller ANZ Teams

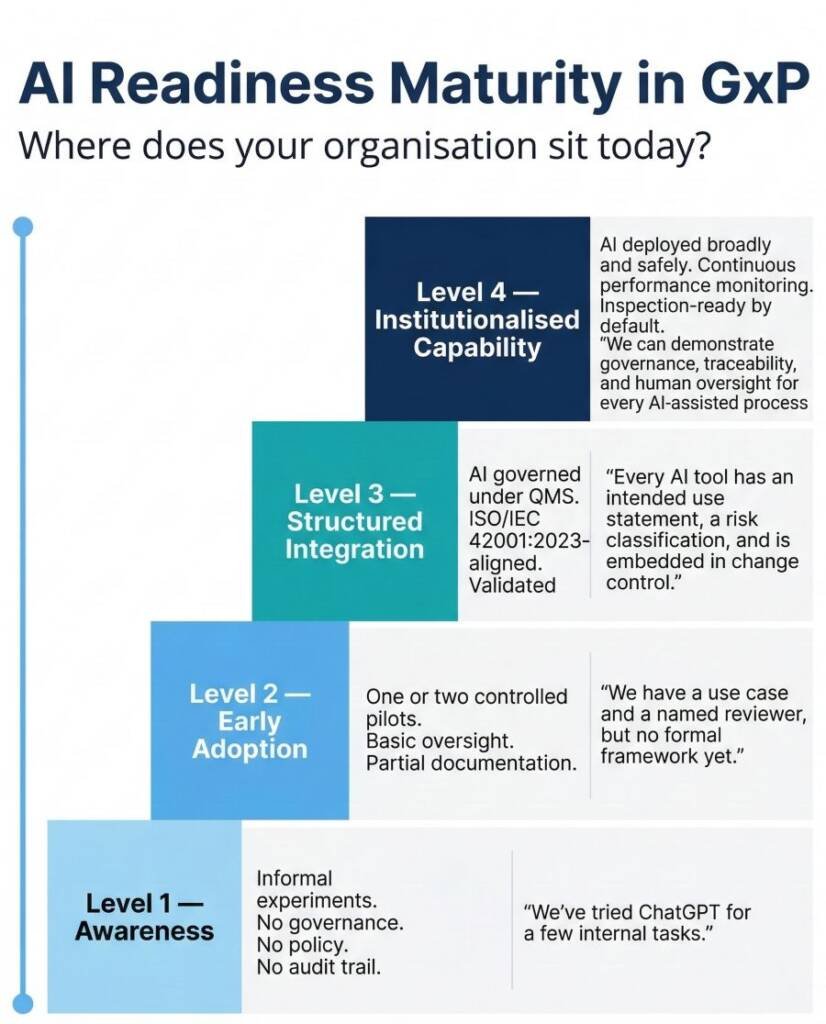

Conversations about AI readiness vary significantly by sponsor size and function. For large global sponsors with dedicated digital health teams, these discussions often focus on delivering projects quickly and at scale. In contrast, smaller ANZ-based sponsors, contract research organisations, clinical laboratories, wholesalers, and research institutions face different stakes. With fewer internal specialists, lighter governance infrastructure, and less tolerance for uncontrolled implementation failures, the sequencing question (governance before deployment, not after) is far more consequential for them.

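Regulations are tightening. In July 2025, the EMA released a draft Annex 22 to the EU GMP Guidelines for consultation, the first dedicated GMP guidance on AI in medicinal product manufacturing. The EU AI Act classifies certain healthcare AI uses, including AI in medical devices that require third-party conformity assessment, as high-risk. Australia's privacy reforms introduce new transparency requirements for automated decision-making. Smaller ANZ teams now have less room to trial AI tools without policy or oversight.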

For these organisations, GxP AI readiness starts with an honest assessment rather than buying software.

Readiness Is Not the Same as Enthusiasm

Budget approval, vendor selection, and a successful proof of concept do not mean you are ready. At most, they show interest and initial technical feasibility. They do not prove that your organisation can operate, monitor, audit, and defend the system under regulatory scrutiny.

AI readiness in GxP requires clarity across dimensions beyond technology. Before any tool runs in a regulated workflow, you must document its intended use, establish stable processes, validate and govern data sources, assign oversight with clear review duties, perform a proportionate risk assessment, and train users so they understand what the system can and cannot do.

Many teams get the sequencing wrong. They treat governance as an afterthought, to be finished after deployment. Regulators and auditors recognise this pattern quickly. Governance retrofitted after go-live is harder to defend and sustain, and more likely to trigger corrective actions. Successful GxP organisations build compliance infrastructure first, then deploy AI into it.

What Does AI Readiness in GxP Actually Look Like? A 10-Point Framework

A defensible readiness assessment covers ten criteria. Each reflects a question an inspector could reasonably ask during an audit of your AI system.

Missing even two or three of these criteria before deployment does not just create compliance risk; it creates accountability gaps that are difficult to close retrospectively. If your organisation cannot answer each question with documented evidence, your AI readiness in GxP is not yet at a deployable standard.

When Should You Wait? Six Red Flags

AI readiness in GxP also means recognising the signals that indicate delay is the more professionally sound path.

- Unstable or undocumented processes.

- No agreed owner.

- Judgment-heavy decisions without human review.

- Black-box vendor tools.

- No change control plan.

- No parallel baseline.

Where Should Smaller ANZ Teams Start?

The best starting points are lower-risk, high-value applications where human review remains explicit and failures are easy to detect and correct.

Drafting and summarisation support is one of the most practical entry points: AI-assisted generation of SOP first drafts, deviation storyboards, CAPA summaries, or training material outlines, with a qualified reviewer approving every output before it enters the quality system. Controlled knowledge retrieval, in which internal tools answer procedural queries from validated document repositories, offers similar benefits, provided a human remains responsible for any action taken on the output.

Administrative support tasks, such as meeting summaries, regulatory change monitoring, and literature pre-screening, deliver genuine operational value without directly impacting regulated product decisions. These applications build AI readiness in GxP by allowing your team to develop oversight competence, identify edge cases in your specific operating environment, and generate the governance evidence needed before scaling.

Out of scope for this stage:

Any AI application that contributes directly to a regulated product or safety decision without robust human control in place.

This boundary protects the investment. Build confidence before you build scale.

What Must Exist Before Go-Live? The Minimum Governance Layer

Seven elements should be documented before any AI tool operates in a regulated workflow. Together, they form the minimum governance layer that allows your system to sit within your quality management system (QMS) on the same footing as any other controlled process.

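- Intended use statement: a documented statement of what the tool is for and where it will be used.

- Basic risk classification: proportionate to the potential patient safety consequences.

- Approval pathway: a named approval authority.

- User guidance: role-specific guidance for the people using the tool.

- Review requirements: documented expectations for human review of outputs.

- Recordkeeping expectations: records that meet audit trail standards.

- Escalation pathway: a defined route for unexpected or unsuitable outputs.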

Embedding these elements into your QMS before deployment means your AI tool operates within the same documented framework as every other controlled system in your organisation. Regulators will not encounter a governance gap. Your team will not face an accountability vacuum when something unexpected occurs.
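To make the "documented before go-live" rule concrete, the seven elements can be modelled as a simple pre-deployment gate. This is a minimal Python sketch, not a validated tool: the `GovernanceRecord` class, the element key names, the tool name, and the controlled-document IDs are all hypothetical, and in practice the check would sit inside your QMS workflow rather than in standalone code.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# The seven minimum governance elements (hypothetical key names).
REQUIRED_ELEMENTS = (
    "intended_use_statement",
    "risk_classification",
    "approval_pathway",
    "user_guidance",
    "review_requirements",
    "recordkeeping_expectations",
    "escalation_pathway",
)


@dataclass
class GovernanceRecord:
    """Hypothetical QMS entry for one AI tool: element key -> controlled document ID."""

    tool_name: str
    documents: dict[str, str] = field(default_factory=dict)

    def missing_elements(self) -> list[str]:
        # An element counts as documented only if it points to a non-blank document ID.
        return [e for e in REQUIRED_ELEMENTS if not self.documents.get(e, "").strip()]

    def go_live_permitted(self) -> bool:
        # Deployment stays blocked until every element is documented.
        return not self.missing_elements()


if __name__ == "__main__":
    record = GovernanceRecord(
        tool_name="deviation-summary-assistant",  # hypothetical tool name
        documents={
            "intended_use_statement": "QA-AI-001",
            "risk_classification": "QA-AI-002",
            "approval_pathway": "QA-AI-003",
        },
    )
    print(record.missing_elements())
    # ['user_guidance', 'review_requirements', 'recordkeeping_expectations', 'escalation_pathway']
    print(record.go_live_permitted())  # False
```

Returning the list of missing elements, rather than only a yes/no answer, gives the accountable owner a ready-made action list.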

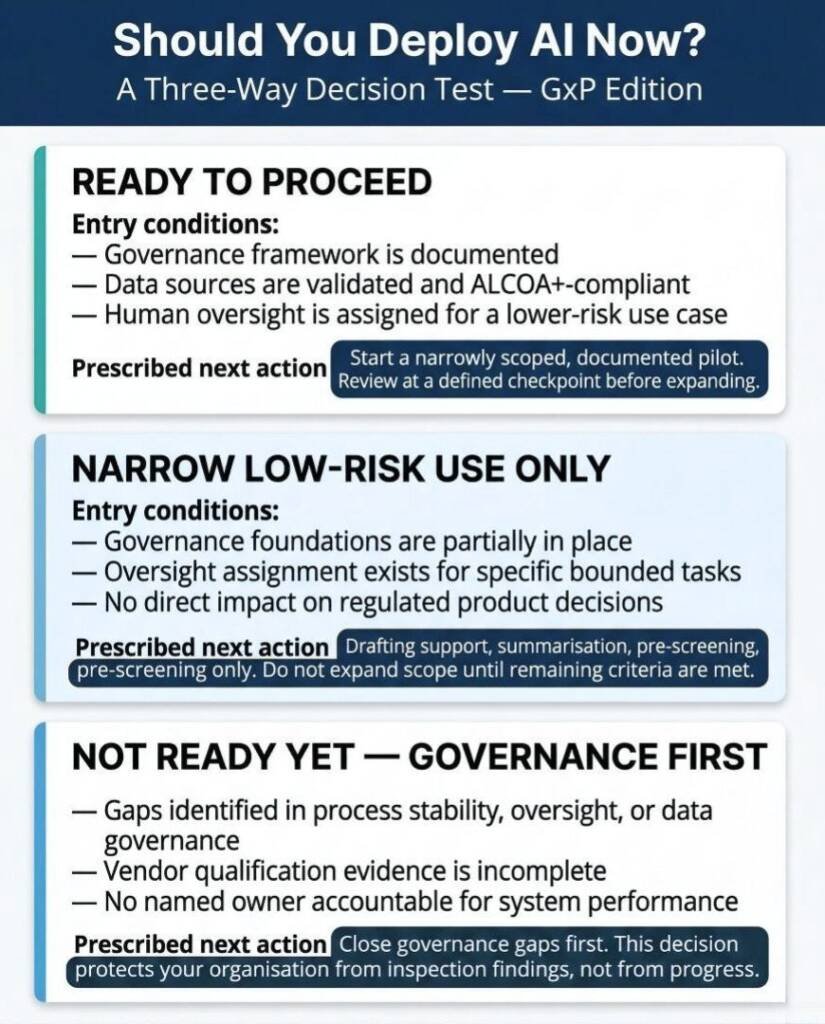

A Simple Closing Decision Test

After working through the readiness criteria above, most organisations fall into one of three positions.

READY TO PROCEED — Controlled Pilot

Governance framework is documented. Data sources are validated and ALCOA+-compliant. Human oversight is assigned for a lower-risk use case. A qualified owner is named and accountable.

Move forward with a narrowly scoped pilot. Document everything. Review at a defined checkpoint before expanding scope.

NOT READY YET — Governance First

You have identified gaps in process stability, oversight assignment, data governance, or vendor qualification.

Close those gaps before deployment. This decision protects your organisation from inspection findings, accountability failures, and erosion of trust in future AI adoption. Closing governance gaps is not a delay; it is the work.

NARROW LOW-RISK AUGMENTATION ONLY

Governance foundations are partially in place. You can proceed with clearly bounded, lower-risk applications — drafting support, summarisation, pre-screening — where human review is explicit and documented.

Do not expand scope until the remaining criteria are fully met.
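The three positions can be expressed as a simple decision function. This is one possible reading of the test, written as a Python sketch: the `Assessment` fields and the order of the checks are assumptions, and each yes/no answer would need to be backed by documented evidence rather than self-declared. Unstable processes, no named owner, and unassigned oversight are treated as hard stops, reflecting the red flags listed earlier in this article.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Position(Enum):
    READY_CONTROLLED_PILOT = "Ready to proceed: controlled pilot"
    NOT_READY_GOVERNANCE_FIRST = "Not ready yet: governance first"
    NARROW_LOW_RISK_ONLY = "Narrow low-risk augmentation only"


@dataclass
class Assessment:
    # Hypothetical yes/no answers; each should be supported by documented evidence.
    processes_stable: bool
    named_owner: bool
    oversight_assigned: bool         # reviewers named, with clear review duties
    governance_documented: bool      # full framework embedded in the QMS
    governance_partial: bool         # some foundations in place, but not all
    data_validated_alcoa_plus: bool
    vendor_qualified: bool
    use_case_lower_risk: bool        # e.g. drafting, summarisation, pre-screening
    human_review_documented: bool    # review step is explicit and recorded


def decide(a: Assessment) -> Position:
    # Hard stops: without these, governance work comes before any deployment.
    if not (a.processes_stable and a.named_owner and a.oversight_assigned):
        return Position.NOT_READY_GOVERNANCE_FIRST
    # Both "go" positions require a lower-risk use case with explicit human review.
    if not (a.use_case_lower_risk and a.human_review_documented):
        return Position.NOT_READY_GOVERNANCE_FIRST
    if a.governance_documented and a.data_validated_alcoa_plus and a.vendor_qualified:
        return Position.READY_CONTROLLED_PILOT
    if a.governance_partial:
        return Position.NARROW_LOW_RISK_ONLY
    return Position.NOT_READY_GOVERNANCE_FIRST


if __name__ == "__main__":
    answers = Assessment(
        processes_stable=True,
        named_owner=True,
        oversight_assigned=True,
        governance_documented=False,
        governance_partial=True,
        data_validated_alcoa_plus=True,
        vendor_qualified=False,
        use_case_lower_risk=True,
        human_review_documented=True,
    )
    print(decide(answers).value)  # Narrow low-risk augmentation only
```

Checking the hard stops first means strong answers elsewhere cannot mask a missing owner or an unstable process, which mirrors the governance-first sequencing this article recommends.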

AI readiness in GxP is not a destination you arrive at once. It is an ongoing calibration between what your governance infrastructure can support and what your operational context requires. The standard for that calibration is always the same: can you use this tool in a controlled, traceable, reviewable, inspection-ready way?

Conclusion

For smaller regulated organisations in Australia and New Zealand, being ready for AI in GxP starts with a simple question: Can you use this system with clear governance, reliable data, proper oversight, and records that stand up to inspection? If you cannot answer yes, it is best to focus on building a strong foundation before moving forward.

Put governance before automation. Make sure people oversee the process before you try to scale up. Taking a careful and defensible approach is more valuable than moving quickly, and in a GxP setting, it is the only way to protect your patients, your organisation, and your regulatory status.

When your governance is solid, your data is clean, and your team knows its oversight roles, AI becomes what it should be: a tool that helps people make good decisions, reduces unnecessary work, and makes audits easier. This is the standard to aim for, and it is what real AI readiness in GxP looks like.

Common Questions and Answers

What is AI readiness in GxP, and why does it differ from general AI adoption?

AI readiness in GxP means your organisation can deploy an AI system in a regulated workflow with documented governance, validated data, assigned human oversight, and a defensible audit trail. General AI adoption focuses on capability and efficiency; GxP adoption requires evidence of control. The difference matters because regulators, including the TGA and EMA, assess AI systems against the same compliance obligations as any other computerised system in a regulated environment.

How does the TGA currently regulate AI tools in pharmaceutical operations?

The TGA regulates AI under its existing technology-agnostic frameworks, including the medical device regulatory scheme where applicable. The TGA closely monitors international developments from the EMA, FDA, and PIC/S, meaning guidance published by those bodies often signals future Australian expectations. Organisations should document intended use, conduct proportionate risk assessments, and apply existing ALCOA+ data integrity requirements to any AI system operating in a GxP context.

Can smaller ANZ organisations realistically implement AI in GxP workflows?

Yes, with appropriate scope selection and governance foundations in place. Smaller teams should begin with lower-risk, high-value applications such as SOP drafting assistance, deviation summarisation, or literature pre-screening, where qualified human review is explicit and failure modes are easily detected. These starting points deliver operational value while building the governance evidence and oversight competence needed for more complex future deployments.

What is the minimum governance layer required before any AI tool goes live in a regulated workflow?

At minimum, you need a documented intended use statement, a basic risk classification proportionate to patient safety consequences, a named approval authority, role-specific user guidance, documented review requirements, recordkeeping expectations that meet audit trail standards, and a defined escalation pathway for unexpected or unsuitable outputs. These seven elements should be embedded in your Quality Management System before deployment, not retrofitted afterwards.

What does EMA Annex 22 require for AI in GMP environments?

EMA Annex 22, released as a consultation draft in July 2025, is the first dedicated GMP guidance for AI systems used in medicinal product manufacturing. Its scope is limited to static models, meaning models that do not continue to learn once deployed, and it sets expectations for documented intended use, performance acceptance criteria, test data quality, traceability, change management, and human oversight of AI-supported critical decisions. Organisations operating in EU-regulated markets, or whose regulatory strategies are influenced by EMA standards, should familiarise themselves with its requirements now.

What should we do if we identify gaps during our AI readiness assessment?

Treat identified gaps as a prioritised action list, not a reason to abandon AI adoption. Governance gaps, such as missing intended use statements, undefined oversight roles, or weak escalation pathways, are typically faster to close than data quality or process stability issues. Document each gap, assign an owner, set a target resolution date, and schedule a reassessment before any deployment decision. If the gap lies in your vendor's qualification evidence, address it through the vendor selection process or seek an alternative provider.
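A gap register does not need specialist software. The Python sketch below captures the four steps (document, assign an owner, set a target date, reassess) in a minimal form. The `Gap` fields, the category labels, and the helper function names are hypothetical; most organisations would hold the same information in their existing CAPA or action-tracking system.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class Gap:
    """One entry in a hypothetical readiness gap register."""

    description: str
    category: str  # illustrative labels, e.g. "governance", "data", "process", "vendor"
    owner: str
    target_date: date
    closed: bool = False

    def __post_init__(self) -> None:
        # Every gap needs an accountable owner before it can be logged.
        if not self.owner.strip():
            raise ValueError(f"Gap '{self.description}' has no owner")


def open_actions(gaps: list[Gap]) -> list[Gap]:
    """Open gaps, earliest target resolution date first."""
    return sorted((g for g in gaps if not g.closed), key=lambda g: g.target_date)


def overdue(gaps: list[Gap], today: date) -> list[Gap]:
    """Open gaps whose target resolution date has passed."""
    return [g for g in open_actions(gaps) if g.target_date < today]


def ready_for_reassessment(gaps: list[Gap]) -> bool:
    # Closing every gap triggers a reassessment, not an automatic go-live.
    return all(g.closed for g in gaps)


if __name__ == "__main__":
    register = [
        Gap("Training data provenance unverified", "data", "Data Steward", date(2026, 6, 30)),
        Gap("No intended use statement", "governance", "QA Lead", date(2026, 3, 31)),
        Gap("Escalation pathway undefined", "governance", "QA Lead", date(2026, 2, 28), closed=True),
    ]
    for gap in open_actions(register):
        print(gap.target_date, gap.owner, gap.description)
    print(ready_for_reassessment(register))  # False
```

Rejecting ownerless gaps at entry enforces the "assign an owner" step, and keeping reassessment separate from deployment preserves the decision point described above.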

How does the TGA view artificial intelligence in healthcare?

The TGA regulates artificial intelligence under its existing, technology-agnostic medical device framework. It expects documented intended use, rigorous risk assessment, and validation proportionate to the potential consequences. Australia closely follows international developments, so EMA and FDA guidance often signals future local expectations.

What happens to data integrity when using AI?

AI systems must adhere strictly to ALCOA+ data integrity principles, just like traditional software. You must ensure clear data provenance, maintain audit trails for AI actions, and validate training datasets. Poor data quality directly translates to significant compliance risks during regulatory inspections.

Is AI validation different from traditional computer system validation?

While the core principles remain the same, AI validation requires additional focus on model explainability and performance monitoring. Adaptive learning models pose unique challenges because they evolve post-deployment. Therefore, you must implement continuous monitoring and strict change control processes.
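Continuous monitoring can start simply. The Python sketch below tracks, over a rolling window, how often a qualified reviewer accepts the AI output without material change, and flags the tool for review under change control when that rate falls below a predefined acceptance criterion. The metric, the `PerformanceMonitor` class, the 0.9 threshold, and the window size are illustrative assumptions; real acceptance criteria should come from your own validation and risk assessment.

```python
from __future__ import annotations

from collections import deque


class PerformanceMonitor:
    """Rolling reviewer-agreement check against a predefined acceptance criterion (hypothetical)."""

    def __init__(self, acceptance_rate: float, window: int) -> None:
        if not 0.0 < acceptance_rate <= 1.0 or window <= 0:
            raise ValueError("acceptance_rate must be in (0, 1] and window must be positive")
        self.acceptance_rate = acceptance_rate
        # Only the most recent `window` review outcomes are kept.
        self.outcomes: deque[bool] = deque(maxlen=window)

    def record(self, reviewer_agreed: bool) -> None:
        """Log whether the reviewer accepted the AI output without material change."""
        self.outcomes.append(reviewer_agreed)

    def agreement_rate(self) -> float | None:
        # Withhold judgement until the window is full.
        if len(self.outcomes) < self.outcomes.maxlen:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_change_control_review(self) -> bool:
        rate = self.agreement_rate()
        return rate is not None and rate < self.acceptance_rate


if __name__ == "__main__":
    monitor = PerformanceMonitor(acceptance_rate=0.9, window=10)
    for agreed in [True] * 8 + [False] * 2:
        monitor.record(agreed)
    print(monitor.agreement_rate())  # 0.8
    print(monitor.needs_change_control_review())  # True
```

Waiting for a full window avoids over-reacting to the first few outputs; in a live system, the flag would also need to feed into your documented escalation pathway.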

What is the most critical first step for AI readiness in GxP?

The most critical first step is establishing a documented AI governance framework. You must define clear policies for acceptable use, data privacy, and human oversight before deploying any tools. This ensures your team operates within safe, compliant boundaries from day one.

References:

- European Medicines Agency (EMA) – Annex 22: Artificial Intelligence – Draft for EudraLex Volume 4 Good Manufacturing Practice Guidelines

- European Commission / EMA – Good Manufacturing Practice Guidelines: Chapter 4, Annex 11 and New Annex 22 – Stakeholder Consultation

- Therapeutic Goods Administration (TGA) – An Overview of Software and Artificial Intelligence

- Office of the Australian Information Commissioner (OAIC) – New AI Guidance Makes Privacy Compliance Easier for Business

- Australian Government – Department of Industry, Science and Resources – Voluntary AI Safety Standard

Disclaimer

This article is provided for educational and informational purposes only. It is intended to support general understanding of regulatory concepts and good practice and does not constitute legal, regulatory, or professional advice.

Regulatory requirements, inspection expectations, and system obligations may vary based on jurisdiction, study design, technology, and organisational context. As such, the information presented here should not be relied upon as a substitute for project-specific assessment, validation, or regulatory decision-making.

We have no commercial relationship with some of the entities, vendors, or software referenced. Any examples are illustrative only, and usage may vary by organisation and their needs.

For guidance tailored to your organisation, systems, or clinical programme, we recommend speaking directly with us or engaging another suitably qualified subject matter expert (SME) to assess your specific needs and risk profile.